
Michael Brown Control Engineering CC

Practical Process Control Training & Loop Optimisation

Bad Actors

Alarm management has become a subject of interest in many plants. With the advent of computerised control and HMI systems, it has become extremely easy to put alarms onto control loops. Nowadays operators are often subjected to a surfeit of alarms. As a result they tend to eventually ignore most of them, and it is often hard for them to determine which alarms are really serious.

A refinery in which I recently optimised some control loops decided to institute a study of the alarms and rationalise them. Part of the study was to identify the alarms that occurred most commonly, and to try to improve the controls so that they stop occurring frequently under normal process conditions. The loops producing these alarms are termed “bad actors”.

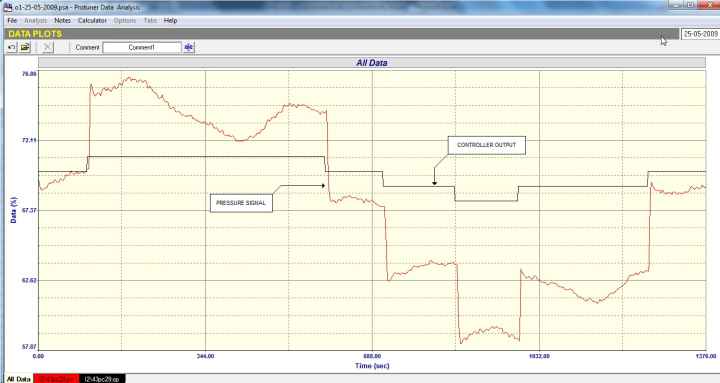

The first example was of a pressure loop which had been optimised properly in 2009. Figure 1 shows the original open loop test which was performed at that time for analysis and tuning. Although the test shows the pressure varying quite a bit with a constant controller output, the variations are probably due to other loops interacting with it. It should be noted that equal size steps in PD (controller output) produced steps on the PV which were also equal in magnitude. This indicates that the valve had linear installed characteristics. The very large steps on the PV produced by relatively small steps on the PD indicate that the valve was considerably oversized.

Figure 1

In 2012 the loop was classified as a bad actor, as it gave rise to numerous alarms. It was retuned and worked well for a while, and then started playing up again. This happened several times, and we were asked to investigate why it needed constant retuning.

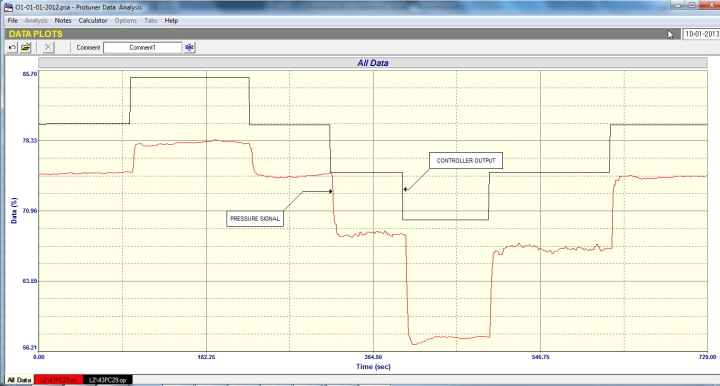

Figure 2

Figure 2 gives the answer. It shows one of the later open loop tests, performed in 2013. It can be seen that the dynamics of the process have changed drastically from those at the time of the test in 2009. Seeing as the process itself hadn’t changed, it was obvious that the valve had been replaced. This was the first reason that the tuning no longer worked properly. The second thing that can be seen from the test is that although steps of equal magnitude were being made on the PD, the size of the responding steps on the PV changed dramatically over the relatively small measurement range where the test was performed. This shows that the valve has very non-linear installed characteristics.

When you tune a controller, you are matching the dynamic characteristics of the controller to those of the process. These characteristics are typically obtained from an open loop step test. If the process’s dynamic characteristics change then the tuning will no longer work, and this is the problem with non-linear installed valve characteristics, particularly if you are working at differing setpoints over the measuring range. You tune at one place and it works well, and if you then change the setpoint, for example to a higher value, the control response will change. In the case of this loop, if you tune at one place, then the response will get slower and slower the higher you go from that place, and vice versa: it will get faster as you move down, and may even go unstable.
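A minimal sketch of how one might quantify this from an open loop test: divide each PV step by the PD step that caused it to get the process gain at that operating point. The readings below are hypothetical and were not taken from Figure 1 or Figure 2; they are only chosen so that the gain falls as the PV rises, as described for this loop.

```python
# Hypothetical step-test readings (NOT from the article's figures).
# Each tuple is (value before the step, value after the step).
pd_steps = [(40.0, 45.0), (45.0, 50.0), (50.0, 55.0)]  # controller output, %
pv_steps = [(20.0, 32.0), (32.0, 38.0), (38.0, 41.0)]  # process variable, % of span


def process_gains(pd_steps, pv_steps):
    """Return the process gain (change in PV / change in PD) for each step."""
    gains = []
    for (pd_before, pd_after), (pv_before, pv_after) in zip(pd_steps, pv_steps):
        gains.append((pv_after - pv_before) / (pd_after - pd_before))
    return gains


gains = process_gains(pd_steps, pv_steps)
# Ratio of largest to smallest gain over the tested range.
# A ratio near 1 suggests linear installed characteristics.
gain_ratio = max(gains) / min(gains)

for (pv_before, pv_after), gain in zip(pv_steps, gains):
    print(f"PV {pv_before:.0f} -> {pv_after:.0f} %: process gain {gain:.2f}")
print(f"gain ratio over the range: {gain_ratio:.1f}")
```

With equal PD steps, a linear installed characteristic would give roughly the same gain for every step. In this made-up data the gain changes by a factor of four, so tuning that suits one end of the range would be a poor fit at the other.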

The first thing that needs doing in this case is to linearise the characteristics, which is usually best done in these days of smart positioners by fitting a correctly compensating characteristic curve into the positioner. I am sure that once this has been done and the controller retuned, the loop will be removed from the list of bad actors.
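As an illustration of what a compensating curve does, the sketch below builds the inverse of a measured installed characteristic by piecewise-linear interpolation. The table of points is hypothetical, and real positioners have their own vendor-specific ways of entering a characterisation curve, so this shows only the arithmetic behind such a curve, not any particular device's configuration.

```python
from bisect import bisect_right

# Hypothetical installed characteristic (assumed for illustration only):
# signal sent to the valve (%) -> resulting effect on the process (% of maximum).
signal_points = [0.0, 20.0, 40.0, 60.0, 80.0, 100.0]
effect_points = [0.0, 45.0, 70.0, 85.0, 94.0, 100.0]


def interpolate(x, xs, ys):
    """Piecewise-linear interpolation; xs must be strictly increasing."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_right(xs, x) - 1
    fraction = (x - xs[i]) / (xs[i + 1] - xs[i])
    return ys[i] + fraction * (ys[i + 1] - ys[i])


def installed(signal):
    """Effect the uncompensated valve produces for a given signal."""
    return interpolate(signal, signal_points, effect_points)


def compensate(demand):
    """Inverse lookup: the signal needed to produce the demanded effect."""
    return interpolate(demand, effect_points, signal_points)


for demand in (10.0, 50.0, 90.0):
    signal = compensate(demand)
    print(f"demand {demand:.0f} % -> signal {signal:.1f} % -> effect {installed(signal):.1f} %")
```

With the compensation in front of the valve, the controller output and the resulting effect line up one to one, which is what allows a single set of tuning to work across the range.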

The second example of a bad actor was a slow level loop that was in a continuous cycle with the valve fully opening and closing during the cycle.

Level loops are integrating processes and are notorious for their tendency to cycle. Furthermore very few C&I (Control and Instrument) practitioners really understand the practicalities of integrating processes, and most have very little idea of how to tune them.

The two main reasons why these processes tend to cycle are bad tuning and hysteresis in the valve. One of the main factors that exacerbates the cycling of integrating processes is the integral term in the controller. The purpose of integral is to eliminate control error, and get the process to setpoint. It is a very powerful factor in control action and normally does a great job. However, if for whatever reason it can’t eliminate error, then the integral never gives up, but keeps on trying and carries on pushing the controller output. This leads to problems in control such as reset (integral) windup, which can lock up loops for long periods and cause big overshoots when coming back into control. It is also a major contributor to the cyclic nature of integrating processes.
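The lock-up effect of windup can be seen in a very small sketch. It assumes a textbook PI calculation with the output limited to 0–100 %, and a made-up error that stays at +10 % for a while (the error the integral cannot remove) and then reverses to -10 %. The gains and timings are arbitrary illustrative values, not settings from the loops described here.

```python
def stuck_steps_after_reversal(kp=1.0, ki=0.5, dt=1.0, hold=200):
    """Count how many samples the output stays pinned at 100 % after the
    error reverses, following `hold` samples of uncorrectable error."""
    errors = [10.0] * hold + [-10.0] * hold
    integral = 0.0
    stuck = 0
    for n, error in enumerate(errors):
        # The integral term keeps accumulating even while the output is saturated.
        integral += ki * error * dt
        raw_output = kp * error + integral
        output = min(100.0, max(0.0, raw_output))
        # After the reversal, count samples where the output is still at its limit.
        if n >= hold and output >= 100.0:
            stuck += 1
    return stuck


print(stuck_steps_after_reversal(hold=50))   # shorter period of uncorrectable error
print(stuck_steps_after_reversal(hold=200))  # longer period of uncorrectable error
```

The longer the error persists, the more the integral winds up and the longer the output stays locked at its limit once the error finally changes sign.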

Integrating processes are in fact better off without the I term in the controllers. Apart from dramatically lessening the tendency of these processes to cycle, the control is actually better. (The reason for this is beyond the scope of this article.) Unfortunately if the I term is not used, then one will almost certainly end up with offset in the control, i.e. the process will settle out away from setpoint. This is generally unacceptable in most controls, and operators get very unhappy if processes don’t ever get to setpoint.
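The offset can be illustrated with a simple simulated tank under P only control. Everything in this sketch is assumed for illustration: a linear outlet valve, a fixed controller bias of 50 %, arbitrary units, and a direct-acting controller on the outflow.

```python
def simulate_p_only(inflow, kp, setpoint=50.0, bias=50.0,
                    valve_gain=1.0, area=10.0, dt=0.1, steps=5000):
    """Return the level a P-only controlled tank settles at.

    The controller manipulates the outlet valve, so a rising level
    must open the valve (direct action).
    """
    level = setpoint
    for _ in range(steps):
        output = bias + kp * (level - setpoint)   # P-only, no integral term
        output = min(100.0, max(0.0, output))     # controller output limits
        outflow = valve_gain * output
        level += dt * (inflow - outflow) / area   # level follows the flow imbalance
    return level


balanced = simulate_p_only(inflow=50.0, kp=2.0)   # inflow matches the bias: no offset
loaded = simulate_p_only(inflow=60.0, kp=2.0)     # extra inflow: settles above setpoint
higher_gain = simulate_p_only(inflow=60.0, kp=10.0)

print(f"balanced: {balanced:.2f}, loaded: {loaded:.2f}, higher gain: {higher_gain:.2f}")
```

In this model the loop settles wherever the valve opening passes the inflow, which is only at setpoint when the load happens to match the bias. A higher gain shrinks the offset but does not remove it.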

The main characteristic of an integrating process is the fact that it has internal capacity, and its input and output can differ for long periods. For example in a level process, the level will rise and fall if the input and output flows are not the same. However if they are equal then the level remains constant. Thus the main thing when controlling such a process is to balance the input and output flows when the desired level is attained. This differs from the more common self-regulating type of process like a flow, where if you change the input, the output will also change to equal the input flow.
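As a rough sketch of that balance, assuming a vessel of constant cross-sectional area $A$ (the symbols are illustrative and do not come from the tests described here), the level $L$ follows the difference between the input flow $q_{in}$ and the output flow $q_{out}$:

$$A \frac{dL}{dt} = q_{in} - q_{out}$$

The level stops moving only when $q_{in} = q_{out}$, and it will then sit at whatever value it had reached at that moment.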

The open loop analytical and tuning test one performs (in manual) on an integrating process like a level process is started when the input and output flows are balanced. A step change is then made on one of them, and from that test one can determine the dynamics of the process and then do a tune.

Generally the easiest method of getting such a process into balance is to switch off the I term (and D term if it has been used). With P only control the system is now equivalent to a ball valve, which normally finds its own balance. However in this case it just went on cycling. We tried all sorts of things to no avail. The last resort is normally to put the controller in manual and try to find the balance point manually. Still no joy. The level just moved up or down.

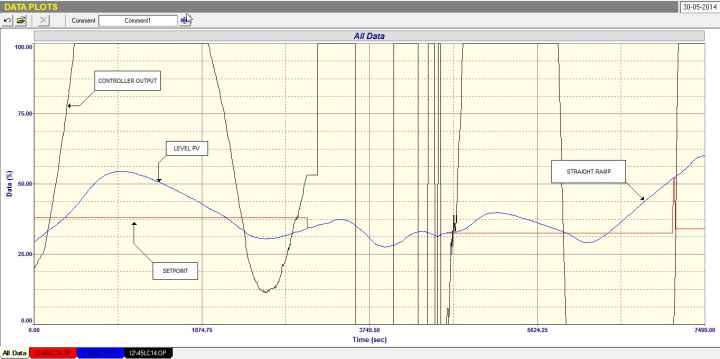

Figure 3

This went on for about a day, without being able to get the level to stay stable. One of the last tests is shown in Figure 3. It was seen on reviewing it that near the end with the PV level dropping, the PD had gone to zero and then the PV started ramping in a very linear fashion.

Now to tune an integrating process one needs to be able to measure the slope of the constant ramp, and divide it by the size of the PD step that caused that ramp. So we had the slope but not the size of PD step, but it was a starting point in calculating tuning. We guessed at various PD step sizes that seemed reasonable, and then tried a tune. To our amazement it worked reasonably well, and for the first time using that tuning and putting the controller into automatic, we managed to stabilise the level, and get some control!
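The calculation described above can be sketched as follows. The ramp data and the candidate PD step sizes are hypothetical and are not the values from Figure 3. The sketch stops at the process gain, since the tuning calculation that follows from it is not given here.

```python
def ramp_slope(times, pv):
    """Least-squares slope of the PV ramp, in PV units per unit time."""
    n = len(times)
    mean_t = sum(times) / n
    mean_pv = sum(pv) / n
    numerator = sum((t - mean_t) * (p - mean_pv) for t, p in zip(times, pv))
    denominator = sum((t - mean_t) ** 2 for t in times)
    return numerator / denominator


def integrating_gain(slope, pd_step):
    """Process gain of an integrating process: ramp slope per unit PD step."""
    return slope / pd_step


# Hypothetical ramp: level (%) sampled every 60 s while falling steadily.
times = [0.0, 60.0, 120.0, 180.0, 240.0, 300.0]
levels = [50.0, 48.8, 47.6, 46.4, 45.2, 44.0]

slope = ramp_slope(times, levels)  # %/s

# The true PD step is unknown, so try a few guesses (assumed values, in %).
guessed_steps = [-5.0, -10.0, -20.0]
candidate_gains = [integrating_gain(slope, step) for step in guessed_steps]

for step, gain in zip(guessed_steps, candidate_gains):
    print(f"guessed PD step {step:+.0f} % -> process gain {gain:.4f} per second")
```

Each guess gives a different process gain and therefore a different tune, which is why several had to be tried before one worked.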

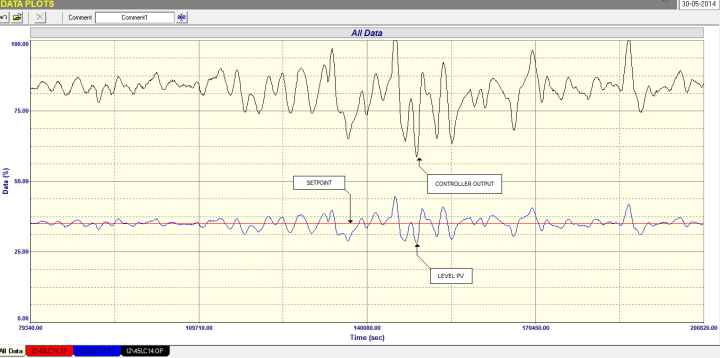

Figure 4

Figure 4 shows a section of the test with the controller in automatic. It can be seen that the controller is really working hard to deal with continuous load disturbances, but the control is pretty good considering the haphazard tuning. It should also be noted that the controller is working pretty close to the top, which could indicate that the valve is possibly undersized.

This is certainly the most difficult level loop I have ever dealt with.